What Is an LLM Agent? An Easy-to-Understand Overview from Concepts to Quantitative Investment Applications

This article explains the fundamental concepts and operating principles of LLM agents from a beginner's perspective. It systematically analyzes their core capabilities, such as information retrieval, judgment, and decision execution, as well as their practical applications and limitations in finance and quantitative investing.


An LLM agent is a sophisticated AI system that goes beyond simple chatbots: it can think, plan, and act autonomously to achieve specific goals. It uses a large language model (LLM) as its ‘brain’ and integrates tools, memory, and planning capabilities to perform complex tasks. This lets it independently handle multi-step instructions such as “Analyze this quarter’s financial report and summarize the investment advice.”

The importance of LLM agents lies in their scalability and practical usefulness. A standalone LLM is limited by the timeliness and accuracy of its knowledge, and it struggles with complex logical inference and with integrating external systems. Agents overcome these constraints by calling external tools such as search APIs, calculators, code execution environments, and databases. In fields that demand precise information and systematic processes, such as finance, research, and data analysis, LLM agents have the potential to serve as powerful ‘digital assistants.’

Key Concepts

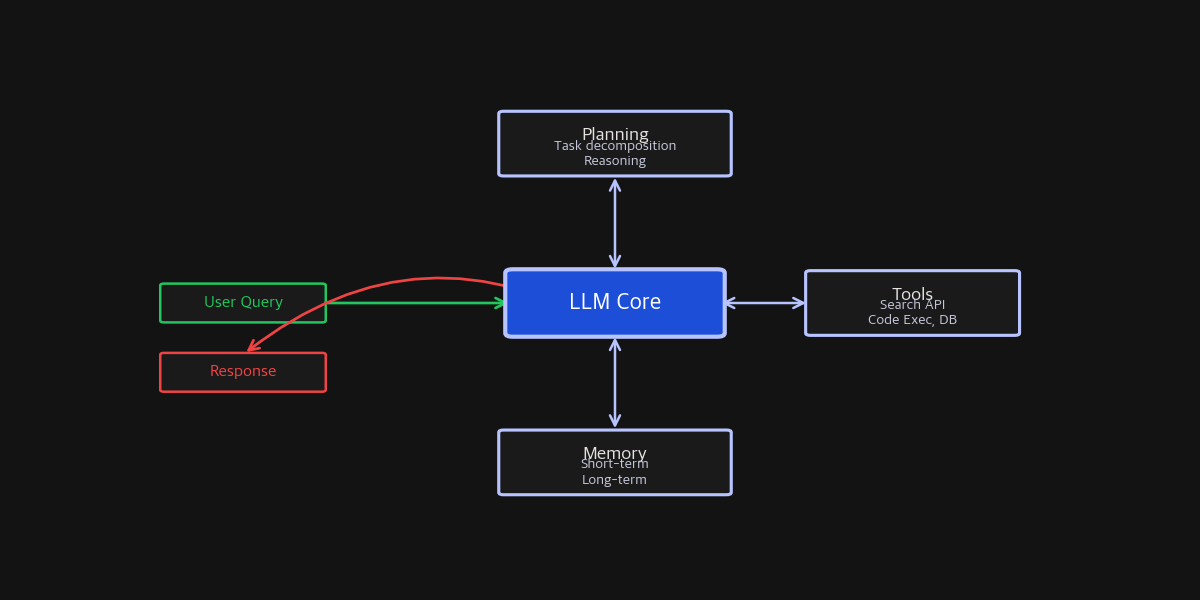

The typical architecture of an LLM agent is composed of the following core components:

- LLM (Core Controller): The brain of the agent. It makes high-level decisions based on user input and current context—deciding, for example, “We need to perform a search” or “Interpret the calculation results.”

- Tools: Functions that perform tasks outside the LLM’s direct capabilities. These include web search, database queries, code execution, formula calculations, and control of specific software. The LLM calls these tools as needed and interprets their outputs.

- Memory: Allows the agent to remember conversation history, intermediate conclusions, and execution results. It is usually split into short-term memory (the current context) and long-term memory (past interactions stored for later retrieval). This memory is essential for staying consistent across extended tasks.

- Planning: Decomposes complex goals into smaller, actionable sub-tasks. For example, “Portfolio analysis” can be broken down into “1. Collect latest market data, 2. Calculate individual asset returns, 3. Derive risk indicators (e.g., Sharpe ratio), 4. Summarize findings.”

Illustration of LLM agent architecture. The central LLM acts as the controller, autonomously performing complex tasks through Planning, Tools, and Memory (Ref: RETA-LLM, Liu et al., 2023)
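The sketch below makes the four components concrete using the “Portfolio analysis” decomposition described above. It is a minimal illustration under stated assumptions and does not reproduce the architecture from the cited paper:

- The LLM planner is replaced by a hard-coded `plan` function.
- The market-data tool reads a small made-up `PRICES` table.
- Every function name is hypothetical.

The controller loop dispatches each sub-task to a tool. Intermediate results live in short-term memory, and the final summary is persisted to long-term memory.

```python
import statistics
from dataclasses import dataclass, field
from typing import Callable

# Made-up closing prices standing in for a market-data API (hypothetical).
PRICES = {"AAA": [100.0, 102.0, 101.0, 104.0], "BBB": [50.0, 49.0, 51.0, 52.0]}

@dataclass
class Memory:
    short_term: dict = field(default_factory=dict)  # working context for the current task
    long_term: list = field(default_factory=list)   # summaries kept across tasks

# --- Tools: plain functions that read and write the working context ---
def collect_data(ctx: dict) -> None:
    ctx["prices"] = PRICES

def compute_returns(ctx: dict) -> None:
    # Simple period-over-period returns for each asset.
    ctx["returns"] = {
        sym: [p1 / p0 - 1 for p0, p1 in zip(series, series[1:])]
        for sym, series in ctx["prices"].items()
    }

def compute_sharpe(ctx: dict) -> None:
    # Per-period Sharpe ratio, assuming a zero risk-free rate and no annualization.
    ctx["sharpe"] = {
        sym: statistics.mean(r) / statistics.stdev(r)
        for sym, r in ctx["returns"].items()
    }

def summarize(ctx: dict) -> None:
    best = max(ctx["sharpe"], key=ctx["sharpe"].get)
    ctx["summary"] = f"Highest per-period Sharpe ratio: {best}"

TOOLS: dict[str, Callable[[dict], None]] = {
    "collect_data": collect_data,
    "compute_returns": compute_returns,
    "compute_sharpe": compute_sharpe,
    "summarize": summarize,
}

def plan(goal: str) -> list[str]:
    # Stand-in for the LLM planner; a real agent would ask the model to decompose the goal.
    if goal == "portfolio analysis":
        return ["collect_data", "compute_returns", "compute_sharpe", "summarize"]
    raise ValueError(f"no plan for goal: {goal!r}")

def run_agent(goal: str, mem: Memory) -> str:
    mem.short_term.clear()               # fresh working context for this task
    for step in plan(goal):
        TOOLS[step](mem.short_term)      # controller dispatches each sub-task to a tool
    summary = mem.short_term["summary"]
    mem.long_term.append(summary)        # persist the outcome for later tasks
    return summary

mem = Memory()
print(run_agent("portfolio analysis", mem))  # Highest per-period Sharpe ratio: AAA
```

In a real agent, the LLM would choose the next tool at each step and interpret its output rather than follow a fixed list. The fixed plan here only keeps the example deterministic.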


Implementation and Operational Considerations

When building or deploying an LLM agent, pay attention to the following:

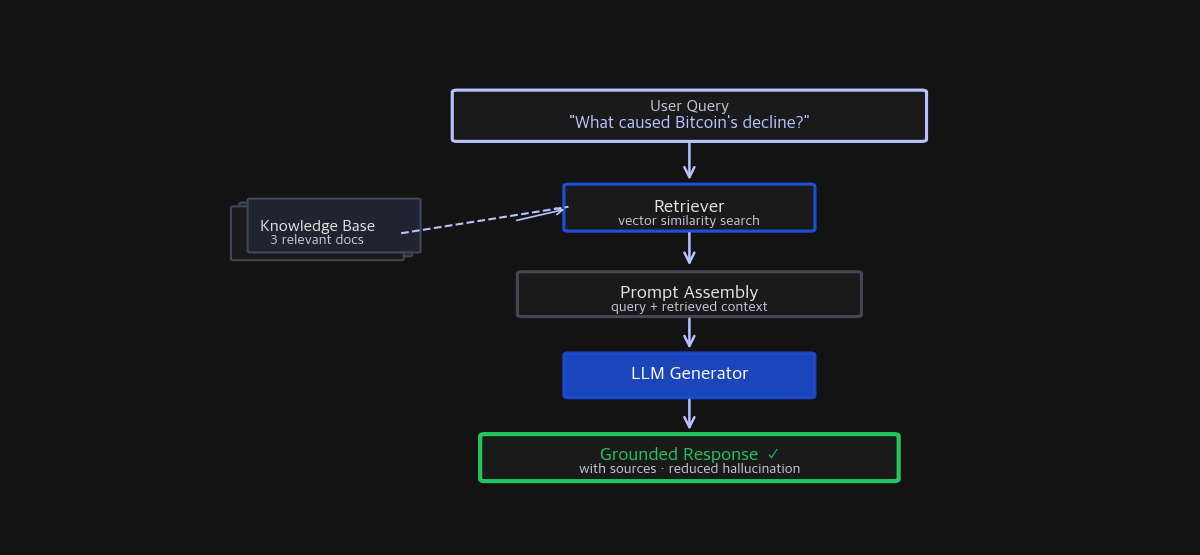

- Reliability and Hallucination Management: LLMs can generate false information (hallucinations). Agents often use tools, especially search, to fetch factual data, but the model can still hallucinate while composing its response. Techniques like Retrieval-Augmented Generation (RAG), which ground the response in retrieved documents, are therefore essential. Research such as RETA-LLM shows how to search and reference external knowledge sources effectively to improve accuracy. Such systems typically let responses cite the URLs or sources they drew on.

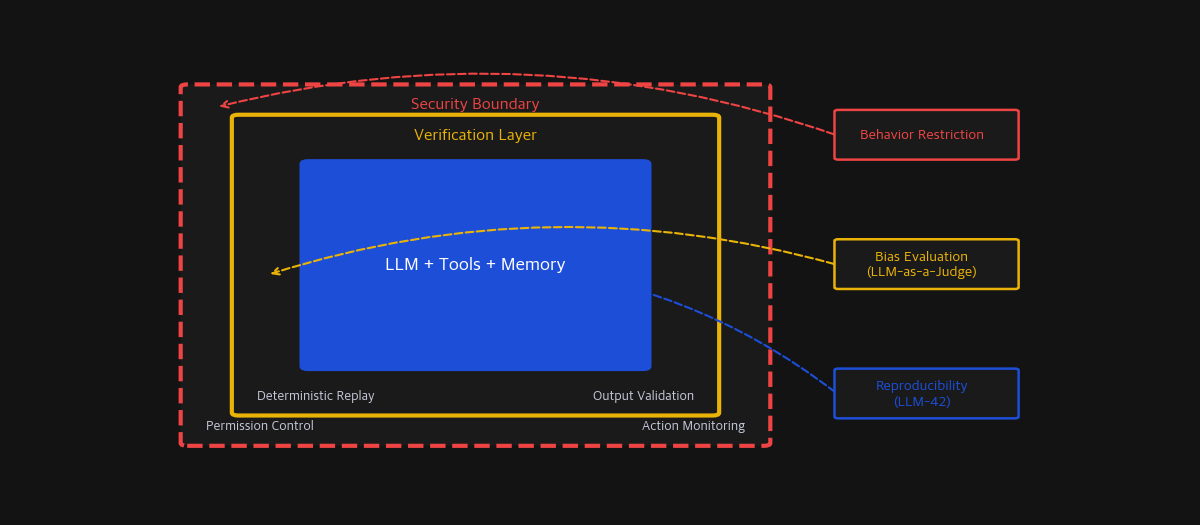

- Judgment Consistency and Bias: The agent may need to evaluate its own outputs or choose between options (e.g., is analysis A or analysis B more rational?). Using an LLM as a judge can produce inconsistent decisions, driven by prompt design or inherent biases. Studies such as “Systematic Evaluation of LLM-as-a-Judge” investigate methods to make such judgments more reliable.

- Scalability and Costs: Real-time searches, large-scale data processing, and iterative reasoning consume significant computational resources. Papers like “Demystifying AI Platform Design” emphasize designing efficient distributed inference platforms for next-generation LLM systems, which underscores the importance of resource planning.
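The retrieval half of RAG can be sketched in a few lines of Python. This is a toy under explicit assumptions:

- The three-document `DOCS` corpus is invented.
- Relevance is scored by simple word overlap rather than BM25 or embeddings.
- `retrieve` and `build_prompt` are hypothetical helpers, not RETA-LLM’s API.

What it shows is the general shape of the technique. Relevant passages are retrieved first, then handed to the LLM together with their source ids so the answer can cite them.

```python
import re
from collections import Counter

# Invented mini knowledge base of (source id, text); a real system would query a search API or index.
DOCS = [
    ("doc-1", "Q3 revenue rose 12 percent year over year on strong cloud demand."),
    ("doc-2", "The central bank held interest rates steady in September."),
    ("doc-3", "Cloud segment margins expanded in Q3 despite higher capex."),
]

def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())

def retrieve(query: str, k: int = 2) -> list[tuple[str, str]]:
    # Score each document by overlapping query tokens (a crude stand-in for BM25 or embeddings).
    q = Counter(tokenize(query))
    scored = []
    for doc_id, text in DOCS:
        d = Counter(tokenize(text))
        scored.append((sum(min(q[t], d[t]) for t in q), doc_id, text))
    scored.sort(key=lambda item: item[0], reverse=True)  # stable: ties keep corpus order
    return [(doc_id, text) for score, doc_id, text in scored[:k] if score > 0]

def build_prompt(query: str) -> str:
    # Ground the LLM: instruct it to answer only from the listed sources and cite their ids.
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query))
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\n\nQuestion: {query}"
    )

print(build_prompt("How did cloud revenue change in Q3?"))
```

The prompt produced here would then be sent to the LLM. Because each passage carries an id, the model’s answer can be checked against the sources it claims to use.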

Limitations and Challenges

While the technology is advancing, several challenges remain:

- Reproducibility Issues: Because of tiny numerical differences, such as floating-point operations being executed in a different order during parallel processing, LLM outputs may vary even for the same input. This is problematic in fields like finance that demand reproducibility. Research such as “LLM-42” proposes verified speculation techniques that aim to make inference outcomes deterministic.

- Complex Reasoning and Long-term Planning Difficulties: On multi-step, intricate problems, the agent may go astray or behave inefficiently. More refined planning algorithms or human oversight are often required.

- Security and Control Risks: Because agents can use external tools such as internet search and code execution, they may take unintended actions or be exploited by malicious actors. Strict restrictions and monitoring systems are necessary.
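The reproducibility problem can be traced to a basic property of floating-point arithmetic: addition is not associative. Summing the same numbers in a different order, as parallel reductions may do, can therefore give slightly different results. The toy below shows how such a difference can flip a greedy choice between two nearly tied candidates. `reduce_sum`, `greedy_pick`, and the two scores are purely illustrative, and the example does not model real GPU kernels or the LLM-42 technique.

```python
def reduce_sum(values: list[float], order: list[int]) -> float:
    # Accumulate values in the given order, like one possible schedule of a parallel reduction.
    total = 0.0
    for i in order:
        total += values[i]
    return total

def greedy_pick(logit_a: float, logit_b: float) -> str:
    # Greedy decoding: choose the candidate with the strictly larger score; ties go to "B".
    return "A" if logit_a > logit_b else "B"

values = [0.1, 0.2, 0.3]   # contributions to the score of candidate "A"
logit_b = 0.6              # score of candidate "B"

sum_forward = reduce_sum(values, [0, 1, 2])   # 0.6000000000000001
sum_backward = reduce_sum(values, [2, 1, 0])  # 0.6

print(sum_forward == sum_backward)            # False
print(greedy_pick(sum_forward, logit_b))      # A
print(greedy_pick(sum_backward, logit_b))     # B
```

The same inputs yield different outputs purely because of evaluation order. This is why deterministic inference requires controlling how computations are scheduled, not just fixing the prompt and sampling settings.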

FAQ

Q: How does an LLM agent differ from a standard chatbot? A: Chatbots primarily answer questions within short, single-turn conversations. LLM agents, however, are assigned long-term goals and autonomously plan, utilize tools, and remember previous steps to achieve them. Their active and autonomous nature is the key distinction.

Q: How can LLM agents be used in quant investing? A: In theory, they can: 1) automatically collect and summarize news, reports, and social media data (search tools); 2) transform text data into market sentiment indicators (NLP); 3) generate trading signals from historical data (data analysis tools); and 4) propose risk-aware portfolio strategies (calculation tools). Despite these possibilities, relying on agents for actual investment decisions raises challenges of reliability, reproducibility, and regulatory compliance.
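Use case 2 (turning text into a sentiment indicator) can be illustrated with a deliberately simple lexicon approach. The word lists, headlines, dates, and function names are all hypothetical. In practice, an agent would more likely use a finance-specific lexicon or ask the LLM itself to score each text.

```python
import re
from collections import defaultdict

# Hypothetical word lists; real systems would use a finance-specific lexicon or a trained model.
POSITIVE = {"beat", "growth", "upgrade", "strong", "record"}
NEGATIVE = {"miss", "downgrade", "weak", "loss", "lawsuit"}

def headline_score(text: str) -> int:
    # +1 per positive word, -1 per negative word (exact word matches only).
    words = re.findall(r"[a-z]+", text.lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)

def daily_sentiment(headlines: list[tuple[str, str]]) -> dict[str, float]:
    # Average headline scores per date to form a simple daily sentiment indicator.
    totals: defaultdict[str, int] = defaultdict(int)
    counts: defaultdict[str, int] = defaultdict(int)
    for date, text in headlines:
        totals[date] += headline_score(text)
        counts[date] += 1
    return {date: totals[date] / counts[date] for date in sorted(totals)}

# Made-up headlines for illustration only.
HEADLINES = [
    ("2024-05-01", "Company A posts record growth; analysts upgrade the stock"),
    ("2024-05-01", "Company B reports an earnings miss on weak demand"),
    ("2024-05-02", "Regulator files lawsuit against Company C"),
]

print(daily_sentiment(HEADLINES))  # {'2024-05-01': 0.5, '2024-05-02': -1.0}
```

An indicator like this would still need to be validated against market data before it is used for signals. The toy exists only to show how free text can be reduced to a time series.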

Q: What skills or tech stack are needed to develop an agent? A: Core LLM APIs (OpenAI, Anthropic, or open-source models), agent frameworks (LangChain, LlamaIndex, AutoGen), the tool APIs the agent needs (search, financial data, code execution environments), and backend logic for connecting components and managing state. Because the field is developing rapidly, it is crucial to keep up with open-source projects and research trends.

References

- RETA-LLM: A Retrieval-Augmented Large Language Model Toolkit

- Systematic Evaluation of LLM-as-a-Judge in LLM Alignment Tasks: Explainable Metrics and Diverse Prompt Templates

- LLM-42: Enabling Determinism in LLM Inference with Verified Speculation

- Demystifying AI Platform Design for Distributed Inference of Next-Generation LLM models
